
1 Update on reflections from Bob Haining's Lecture

Earlier this year Prof Bob Haining from the Geography Department at Cambridge visited and gave us a great lecture on spatial regression.

This Tuesday at the GIS Forum we were lucky to be joined by statistician Phil Kokic from CSIRO (Phil is my PhD supervisor), who had heard we'd be discussing spatial autocorrelation. Here are some quick notes I made:

1.1 CART Tree analysis that addresses the (potential) spatial autocorrelation problem

We started off the discussion with an assessment of the approach described in the blog post "Classification Trees and Spatial Autocorrelation".

I've been thinking more and more about decision trees/CART/random forest methods for selecting a subset of relevant variables (and interactions) for use in GLM or GAM model construction. In a perfect world I'd have data on the main predictor I wanted to model, and enough data on all the other relevant predictors (especially confounding or modifying variables) to ensure I get a 'well-behaved model'. But with so much data around, and so many plausible relationships one might choose to include, we need a way to narrow these down to just the most important covariates, confounders and interactions. CART or some variation on it seems a good way to do this, but it too is prone to the potential problem of spatially correlated errors.

The idea from that blog post is:

"compute the classification tree, calculate residuals and use it for a Mantel-test and Mantel correlograms. The Mantel correlograms test differences in dissimilarities of the residuals across several spatial distances and thus enable you to detect lag-distances where possible spatial autocorrelation vanishes. … If [you] encounter autocorrelation … try to use subsamples of the data avoiding resampling within the lag-distance."
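To make the quoted idea concrete for myself, here is a minimal Python sketch of a Mantel correlogram on residuals, using only the standard library. It assumes you already have one residual per location (e.g. observed minus fitted from the tree) and uses Euclidean distance, coding each distance class as a 0/1 indicator. The function names and the sign convention (negative r means residuals are more alike than average at that lag) are my own choices for illustration, not those of any particular package.

```python
import math
import random


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)


def mantel_correlogram(coords, residuals, breaks, n_perm=999, seed=1):
    """Mantel correlogram of residuals (hypothetical helper).

    For each distance class [lo, hi) in `breaks`, correlate the residual
    dissimilarities |r_i - r_j| with a 0/1 indicator of 'pair is in this
    class'. Negative r means pairs at this lag have MORE similar residuals
    than average (positive spatial autocorrelation). The one-sided p-value
    comes from permuting residuals among locations.
    Returns a list of (lo, hi, r, p) tuples.
    """
    rng = random.Random(seed)
    n = len(coords)
    pairs = [(i, j) for i in range(n) for j in range(i + 1, n)]
    dist = [math.dist(coords[i], coords[j]) for i, j in pairs]

    def dissimilarities(res):
        return [abs(res[i] - res[j]) for i, j in pairs]

    observed = dissimilarities(residuals)
    results = []
    for lo, hi in zip(breaks[:-1], breaks[1:]):
        in_class = [1.0 if lo <= d < hi else 0.0 for d in dist]
        r_obs = pearson(observed, in_class)
        hits = 1  # the observed arrangement counts as one permutation
        for _ in range(n_perm):
            shuffled = list(residuals)
            rng.shuffle(shuffled)
            if pearson(dissimilarities(shuffled), in_class) <= r_obs:
                hits += 1
        results.append((lo, hi, r_obs, hits / (n_perm + 1)))
    return results
```

The first distance class at which r is no longer significantly negative is one (still somewhat subjective) candidate for the threshold lag distance.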

I think the workflow would be to:

- fit the classification tree (Question: is it best to use all the data, or a sample, e.g. via cross-validation?).

- get the residuals and visually assess the Mantel correlogram, i.e. the plot of residual correlation against lag distance, and decide on a threshold distance beyond which the autocorrelation vanishes (Question: is there an objective way to do this?).

- subsample the data so that every retained pair of points is further apart than the threshold (we have to keep one point out of each close pair; dropping both would leave us with data only from the sparsely sampled parts of the study region).
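The subsampling step can be sketched as a greedy spatial thinning: visit the points in random order and keep a point only if it is at least the threshold distance from every point already kept. This is just one simple way to "keep one out of each close pair"; the function name and the greedy rule are assumptions for illustration.

```python
import math
import random


def thin_by_distance(coords, threshold, seed=0):
    """Greedy spatial thinning (hypothetical helper).

    Visit points in a random order and keep a point only if it is at least
    `threshold` away from every point kept so far. Every retained pair is
    therefore >= threshold apart, and an isolated point is always kept, so
    sparse parts of the study region are not thrown away.
    Returns the sorted indices of the retained points.
    """
    order = list(range(len(coords)))
    random.Random(seed).shuffle(order)
    kept = []
    for i in order:
        if all(math.dist(coords[i], coords[k]) >= threshold for k in kept):
            kept.append(i)
    return sorted(kept)


# Hypothetical example: a tight cluster of three points plus one isolated point.
points = [(0.0, 0.0), (0.5, 0.0), (1.0, 0.0), (10.0, 10.0)]
subsample = thin_by_distance(points, threshold=2.0, seed=3)
print(subsample)  # one index from the cluster (0, 1 or 2), plus index 3
```

Running it with several seeds gives several different subsamples, each of which could be refitted and the results compared.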

We all agreed this sounded OK, but it only avoids the problem of spatial autocorrelation rather than modelling it (and it throws away data).

1.2 Modeling with control for spatial autocorrelation

So we all agreed we'd prefer a model that controls for spatial autocorrelation. I confessed that I have always found the GeoBUGS tutorial and other tutorials on Bayesian methods for this very difficult, and would really like a "simple" way to make the problem go away. So first we briefly reviewed Prof Haining's three equations again:

NOTE: THE FOLLOWING IDEAS WORK BEST FOR AREAL DATA.

1.3 The Spatial Error Model
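In one standard form (my own write-up, assuming \(i\) indexes areas, \(j\) indexes the neighbours of area \(i\), and there are two covariates \(X_1\) and \(X_2\)), the spatial error model is:

```latex
Y_{i} = \beta_{0} + \beta_{1} X_{1i} + \beta_{2} X_{2i} + \eta_{i},
\qquad
\eta_{i} = \lambda \sum_{j} w_{ij}\, \eta_{j} + \varepsilon_{i}
```

The second equation, a simultaneous autoregressive process with neighbour weights \(w_{ij}\), autocorrelation parameter \(\lambda\) and independent errors \(\varepsilon_{i}\), is one common assumption for how \(\eta_{i}\) is spatially autocorrelated; other structures are possible.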

Where:

\(\eta_{i}\) = Spatially autocorrelated errors.

1.4 The Spatial Lag Model
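One standard way to write the spatial lag model (assuming \(i\) indexes areas, \(j\) the neighbours of area \(i\), \(\varepsilon_{i}\) independent errors, and "Neighbours \(Y_{ij}\)" a weighted average of \(Y\) over the neighbours of area \(i\)) is:

```latex
Y_{i} = \beta_{0} + \rho\,(\text{Neighbours } Y_{ij}) + \beta_{1} X_{1i} + \beta_{2} X_{2i} + \varepsilon_{i}
```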

Where:

\(\rho\,(\text{Neighbours } Y_{ij})\) = an additional explanatory variable: the value of the dependent variable in neighbouring areas, with coefficient \(\rho\).

1.5 Spatially Lagged Independent Variable(s)
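One standard way to write the model with a spatially lagged covariate (assuming \(i\) indexes areas, \(j\) the neighbours of area \(i\), \(\varepsilon_{i}\) independent errors, and only \(X_2\) lagged) is:

```latex
Y_{i} = \beta_{0} + \beta_{1} X_{1i} + \beta_{2} X_{2i} + \beta_{2L} X_{2ij} + \varepsilon_{i}
```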

Where:

\(\beta_{2L} X_{2ij}\) = the independent variable \(X_2\), spatially lagged (i.e. its value in the neighbouring areas), with coefficient \(\beta_{2L}\).
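The lagged outcome in the spatial lag model and the lagged covariate here are the same operation applied to different variables. A minimal sketch, assuming the "neighbours" summary is the plain average over first-order neighbours (row-standardised weights); the helper name and the zero lag for areas with no neighbours are my assumptions:

```python
def spatial_lag(values, neighbours):
    """Spatial lag of a variable using row-standardised weights (hypothetical helper).

    neighbours[i] lists the indices j of the areas adjacent to area i; each
    neighbour gets weight 1 / len(neighbours[i]), so the lag is simply the
    mean of the neighbours' values. Areas with no neighbours get 0.0 (an
    arbitrary choice; such 'islands' need a decision in any real analysis).
    """
    lag = []
    for nbrs in neighbours:
        lag.append(sum(values[j] for j in nbrs) / len(nbrs) if nbrs else 0.0)
    return lag


# Hypothetical example: four areas in a row (0-1-2-3).
neighbours = [[1], [0, 2], [1, 3], [2]]
y = [1.0, 2.0, 3.0, 4.0]
print(spatial_lag(y, neighbours))  # [2.0, 2.0, 3.0, 3.0]
```

Applied to \(X_2\), the result is a column that can simply be added to the regression. Applied to \(Y\), it gives the spatial lag term, but then plain least squares is biased because \(Y\) appears on both sides of the equation, so dedicated estimators (e.g. maximum likelihood) are normally used.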

1.6 Discussion

- Phil agreed with Bob that the spatial error model is the best, the spatial lag model is OK, and spatially lagged covariates are not so great.

- For fitting spatial error models, Phil suggested looking at the R packages spBayes and spTimer.

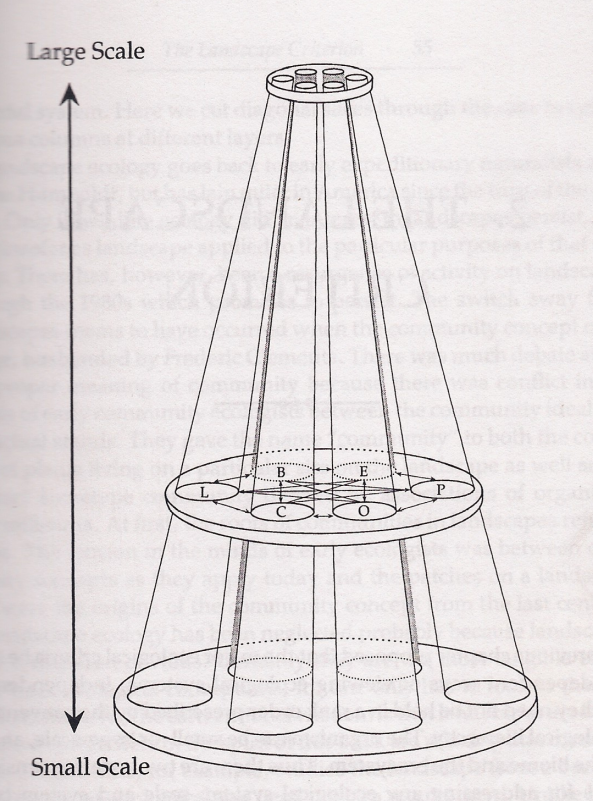

- I pointed out that I am mostly interested in "spatially structured time-series models" rather than spatial models at a single point in time. By this I mean that we have several neighbouring areal units observed over a period of time. In this framework the general methods of time-series modelling are used to control for temporal autocorrelation. However, this makes spatial error and spatial lag models tricky to apply, because the spatial autocorrelation needs to be assessed at many points in time.

- I asked: if the spatial lag model is OK (and it seems easier to fit into the time-series framework), how can I check whether it has done the trick? If this were a purely spatial model we could check for spatial autocorrelation in the residuals, as described in the CART blog post above, but here we have many maps we could make (one for every time point), and our spatial autocorrelation measure would surely vary a lot over time. So would a simple approach be to assess the effect on the standard error of beta1 (our primary interest), and, if it is bigger but still significant, be reassured that our result isn't affected? Or perhaps we should assess the beta on the lagged variable: for instance, is a significant p-value on the lagged beta an indication that it is capturing the unmeasured spatial associations represented by the neighbourhood variable?

- If it hadn't done the trick, Nerida pointed out that this might be because the neighbourhoods are not appropriately represented by the first-order neighbours alone, so more neighbours could be included, moving out several concentric circles to wider and wider neighbourhoods.

- Nasser and Phil pointed out that the lagged variable (the outcome in the neighbours) includes an element of the exposure variables (the neighbours' outcomes are themselves partly driven by their exposures), and said that it would be difficult to 'unpack' what that part of the model meant.

- So it looks like there is no simple answer, and the spatial error model is still preferred.
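For the residual check I asked about (one map per time point), here is a minimal standard-library sketch: compute Moran's I of the residuals separately at each time point, and optionally widen the neighbourhood to higher-order neighbours, as Nerida suggested. It assumes binary contiguity weights given as neighbour lists; all function names are hypothetical.

```python
from collections import deque


def morans_i(values, neighbours):
    """Moran's I with binary weights: w_ij = 1 if j is in neighbours[i], else 0.

    Assumes the values are not all identical (otherwise the denominator is 0).
    """
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    s0 = sum(len(nbrs) for nbrs in neighbours)  # sum of all weights
    num = sum(dev[i] * dev[j] for i in range(n) for j in neighbours[i])
    den = sum(d * d for d in dev)
    return (n / s0) * num / den


def neighbours_up_to_order(neighbours, k):
    """Cumulative neighbours of orders 1..k: every area reachable in at most
    k steps through the first-order contiguity graph (the widening
    'concentric circles' idea)."""
    result = []
    for start in range(len(neighbours)):
        steps = {start: 0}
        queue = deque([start])
        while queue:
            a = queue.popleft()
            if steps[a] == k:
                continue  # don't expand beyond order k
            for b in neighbours[a]:
                if b not in steps:
                    steps[b] = steps[a] + 1
                    queue.append(b)
        result.append(sorted(a for a in steps if a != start))
    return result


def morans_i_by_time(residuals_by_time, neighbours):
    """One Moran's I per time point; residuals_by_time[t][i] is the model
    residual for area i at time t."""
    return [morans_i(res, neighbours) for res in residuals_by_time]


# Hypothetical example: four areas in a row (0-1-2-3), two time points.
first_order = [[1], [0, 2], [1, 3], [2]]
residuals = [[1.0, 2.0, 3.0, 4.0], [1.0, -1.0, 1.0, -1.0]]
print(morans_i_by_time(residuals, first_order))  # approximately [0.333, -1.0]
print(neighbours_up_to_order(first_order, 2))    # [[1, 2], [0, 2, 3], [0, 1, 3], [1, 2]]
```

Under no spatial autocorrelation the expected value of Moran's I is \(-1/(n-1)\), so a series of values hovering near that would be reassuring, while a persistently positive series would suggest the lag term hasn't done the trick.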

</html>